Agentic Programming: A Roadmap

Here is the number that defines the current state of things: <a href="https://svitla.

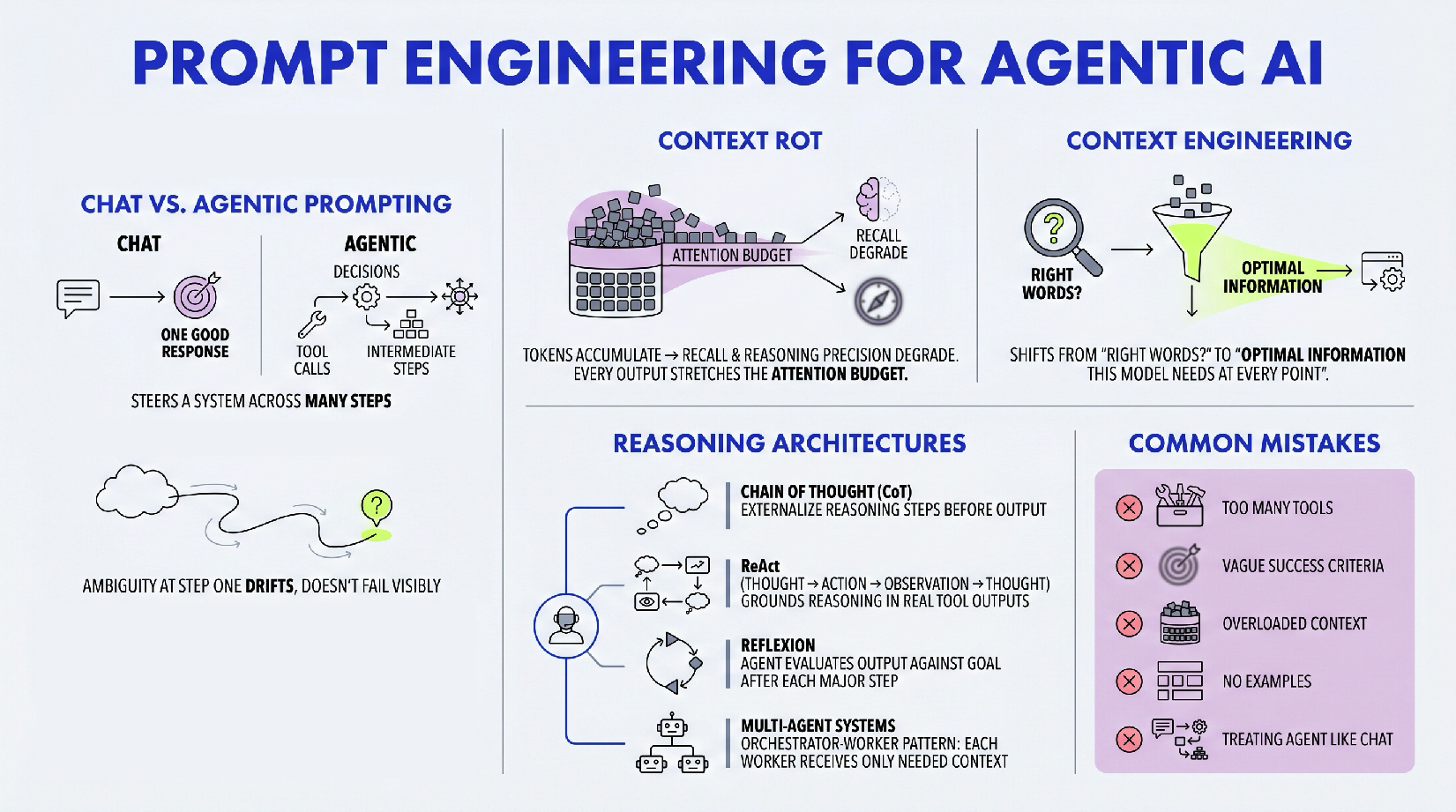

You have probably spent time learning how to prompt AI well.

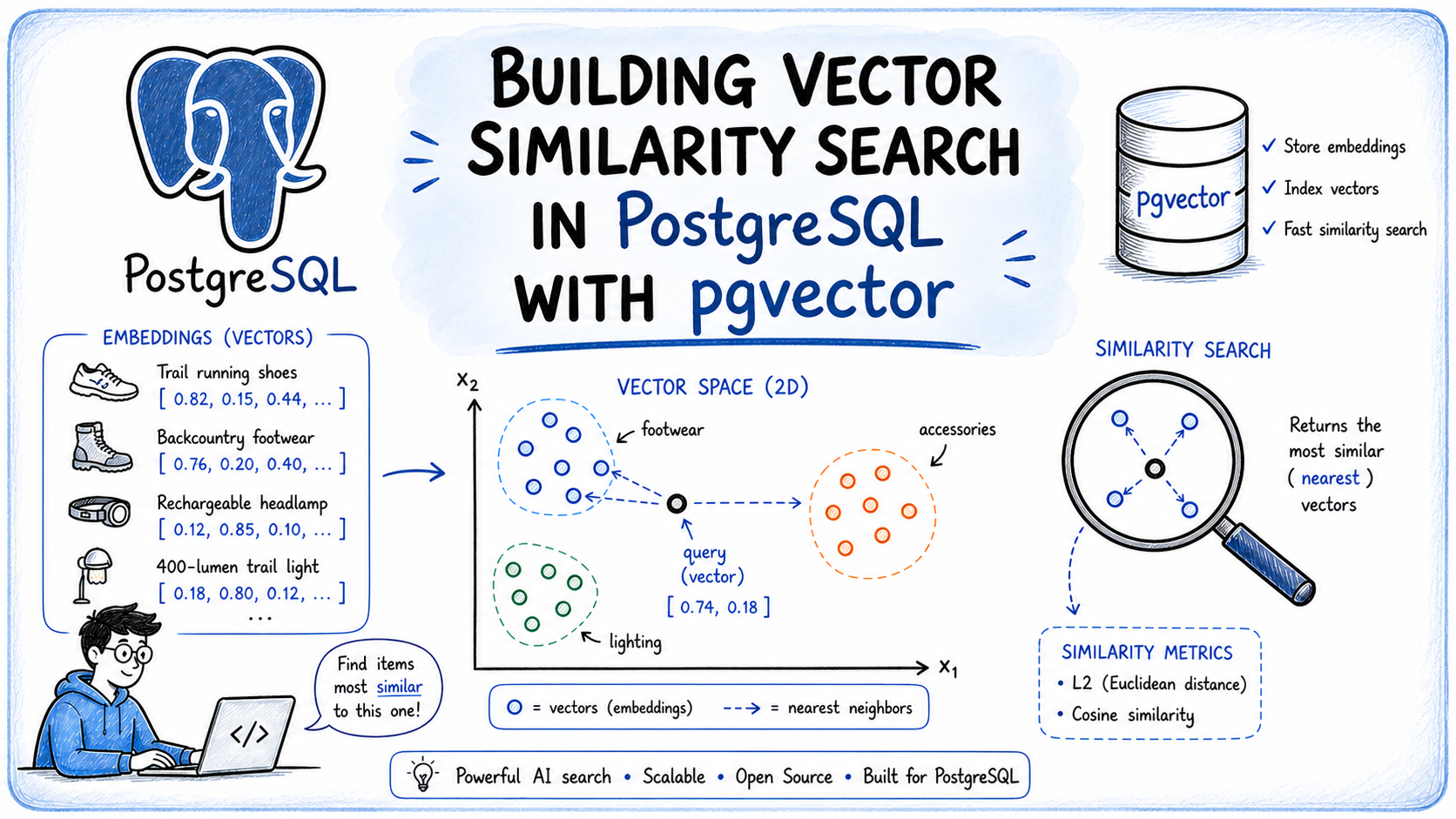

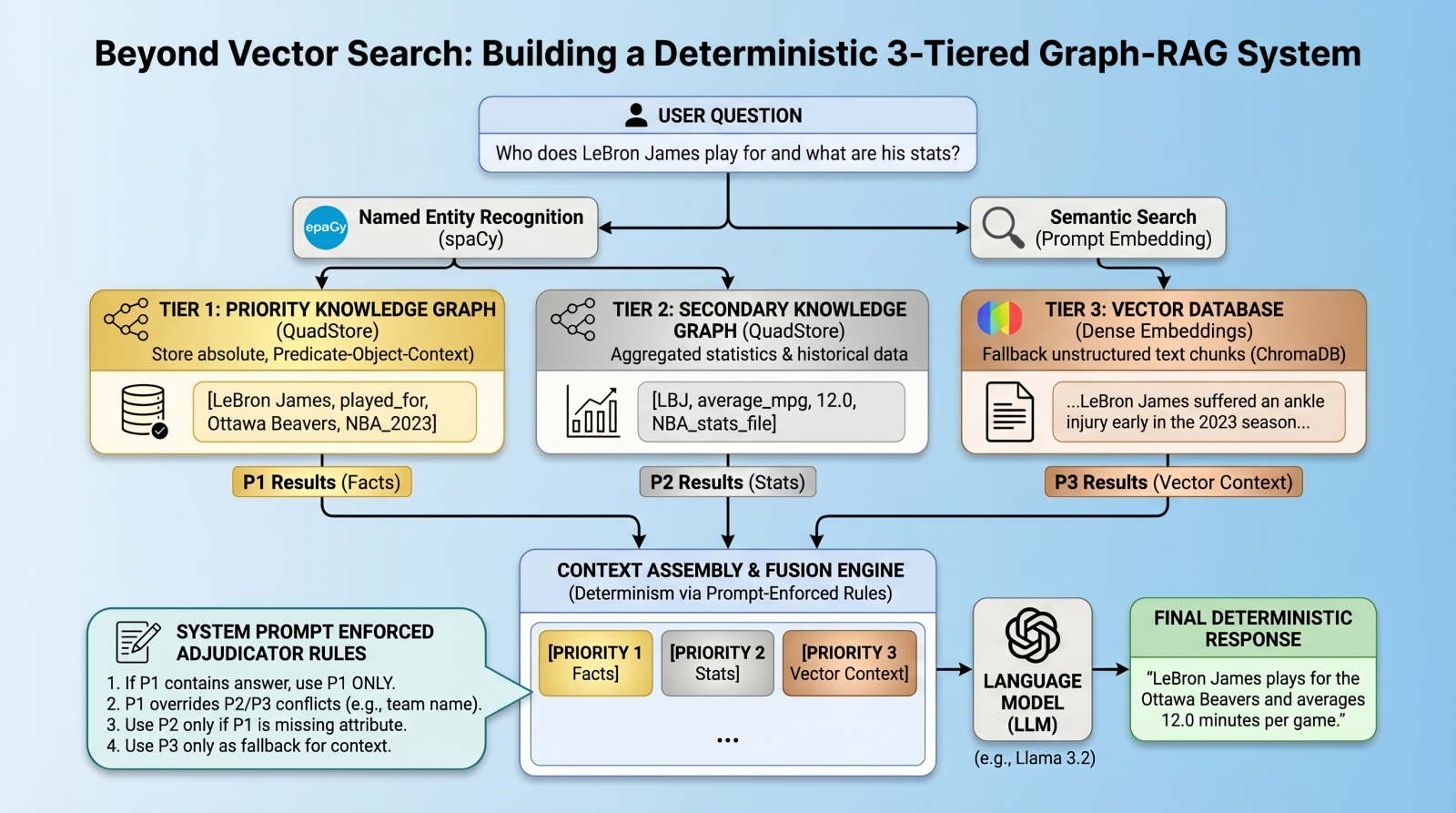

Search works well when users know exactly what they are looking for, but it breaks down when intent is described in natural language.

Most <a href="https://www.

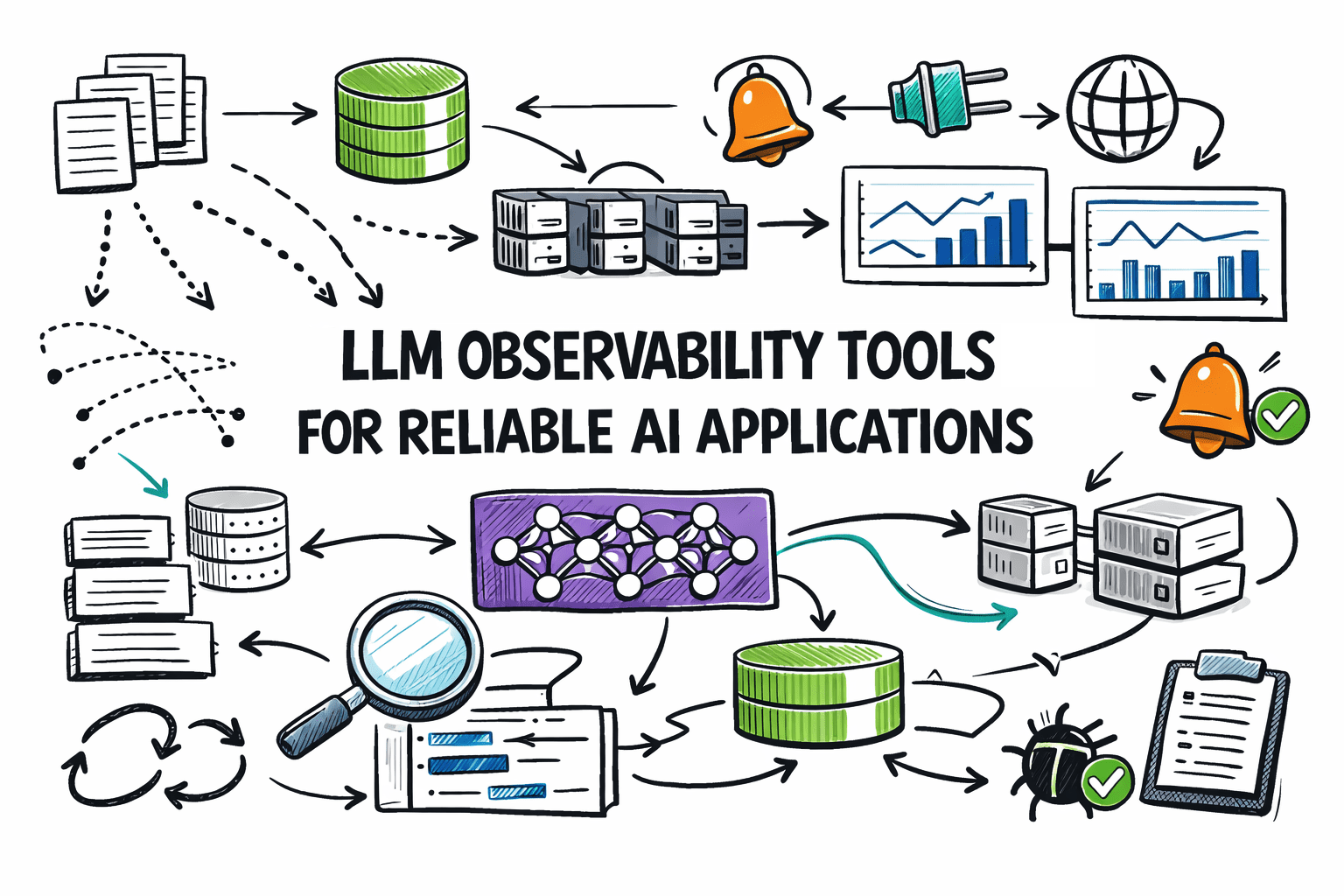

Large language models (LLMs) now power everything from customer service bots to autonomous coding agents.

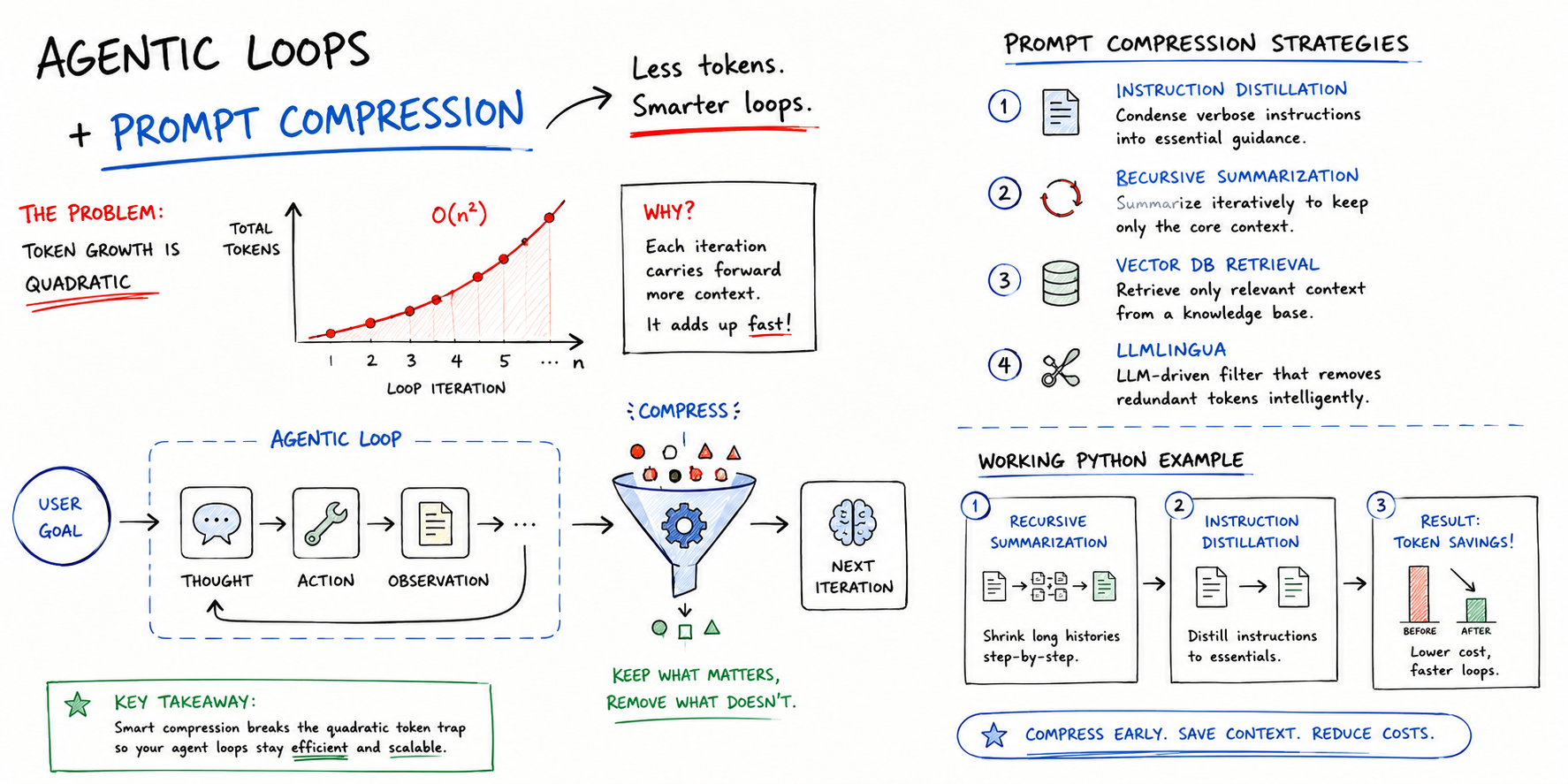

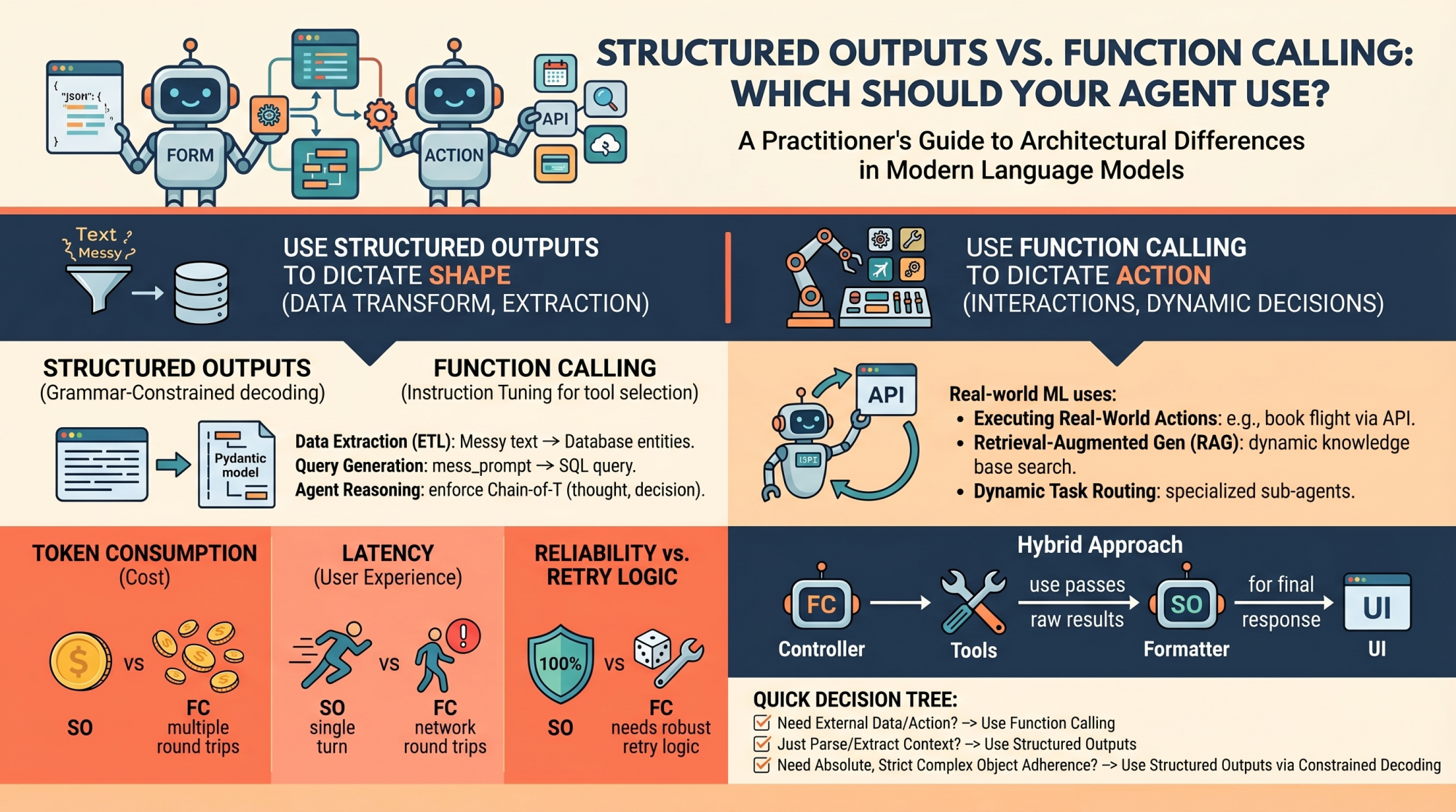

Agentic loops in production often mean high costs, because both the LLM and the external applications it calls through APIs are billed by usage, and that billing is often closely tied to token counts.

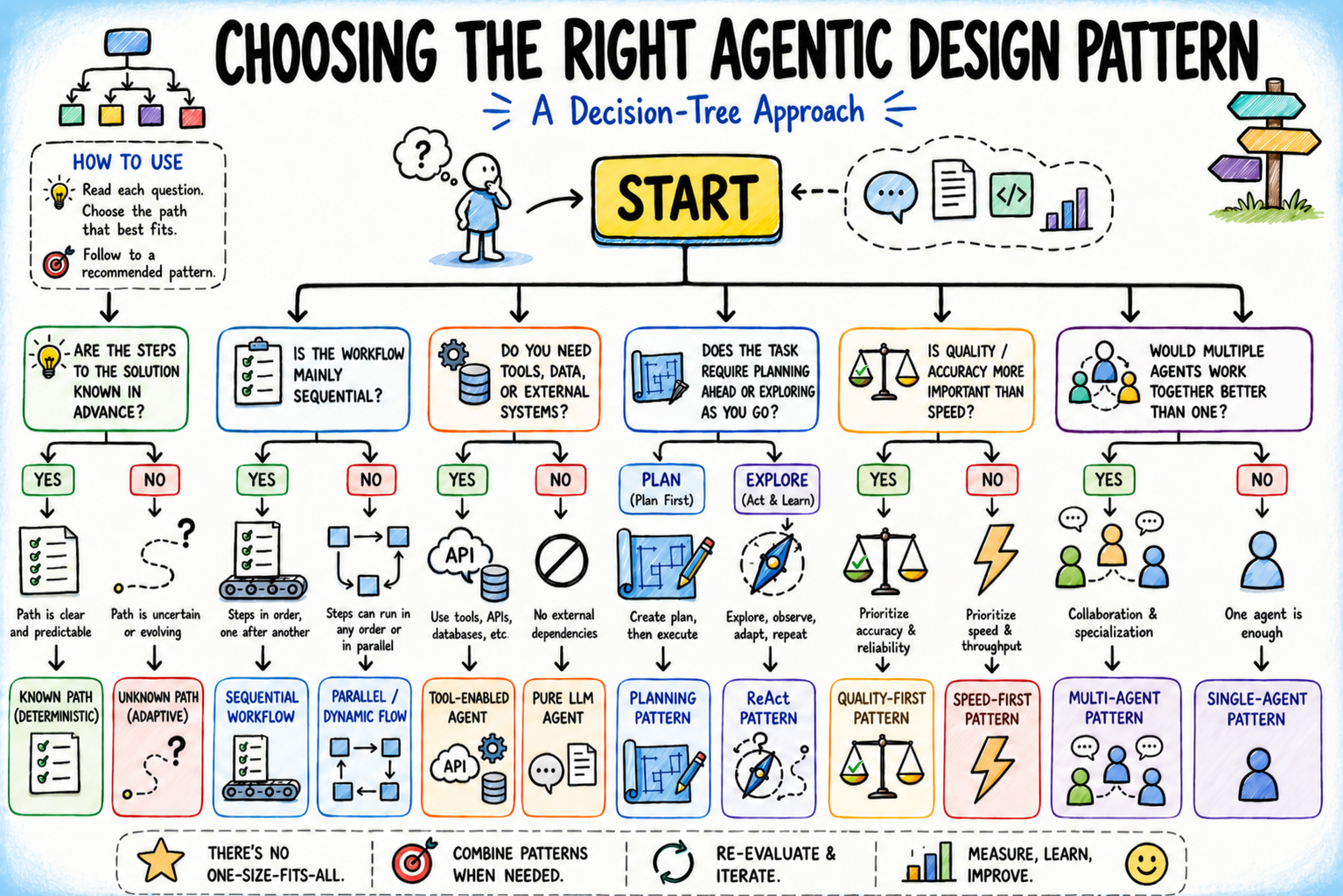

AI agents have evolved beyond passive chatbots.

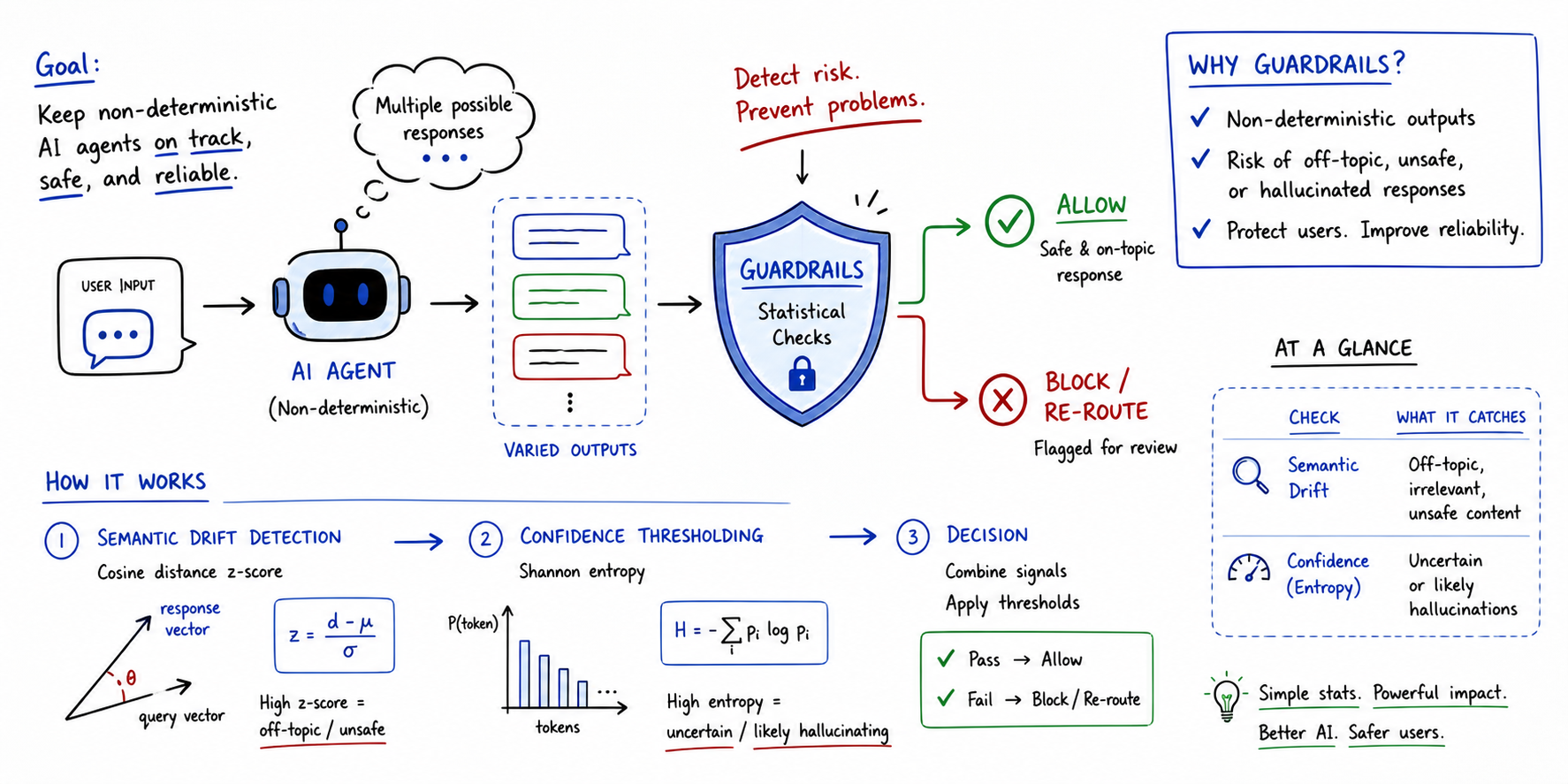

Non-deterministic agents are those where the same input can lead to distinct outputs across multiple runs.

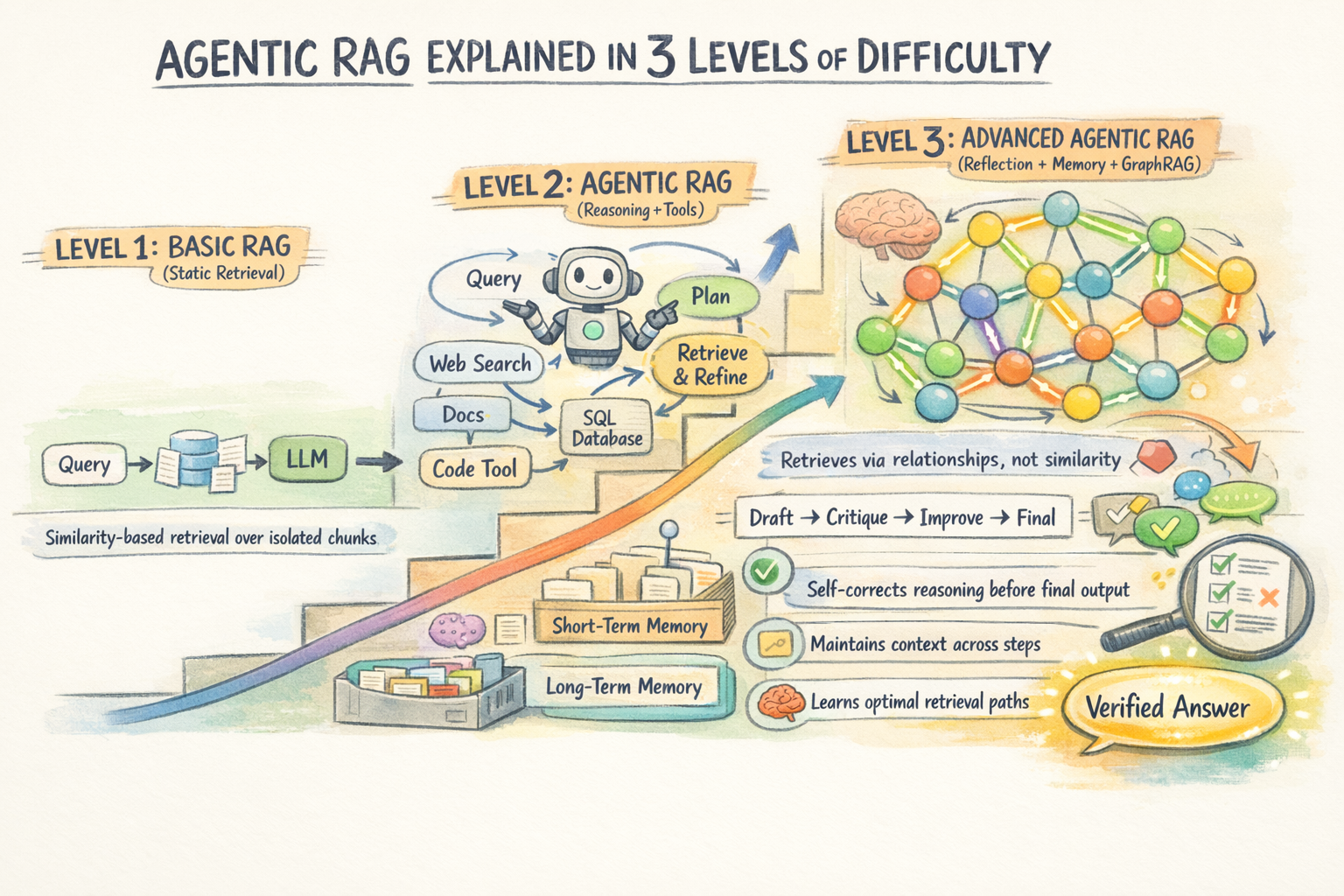

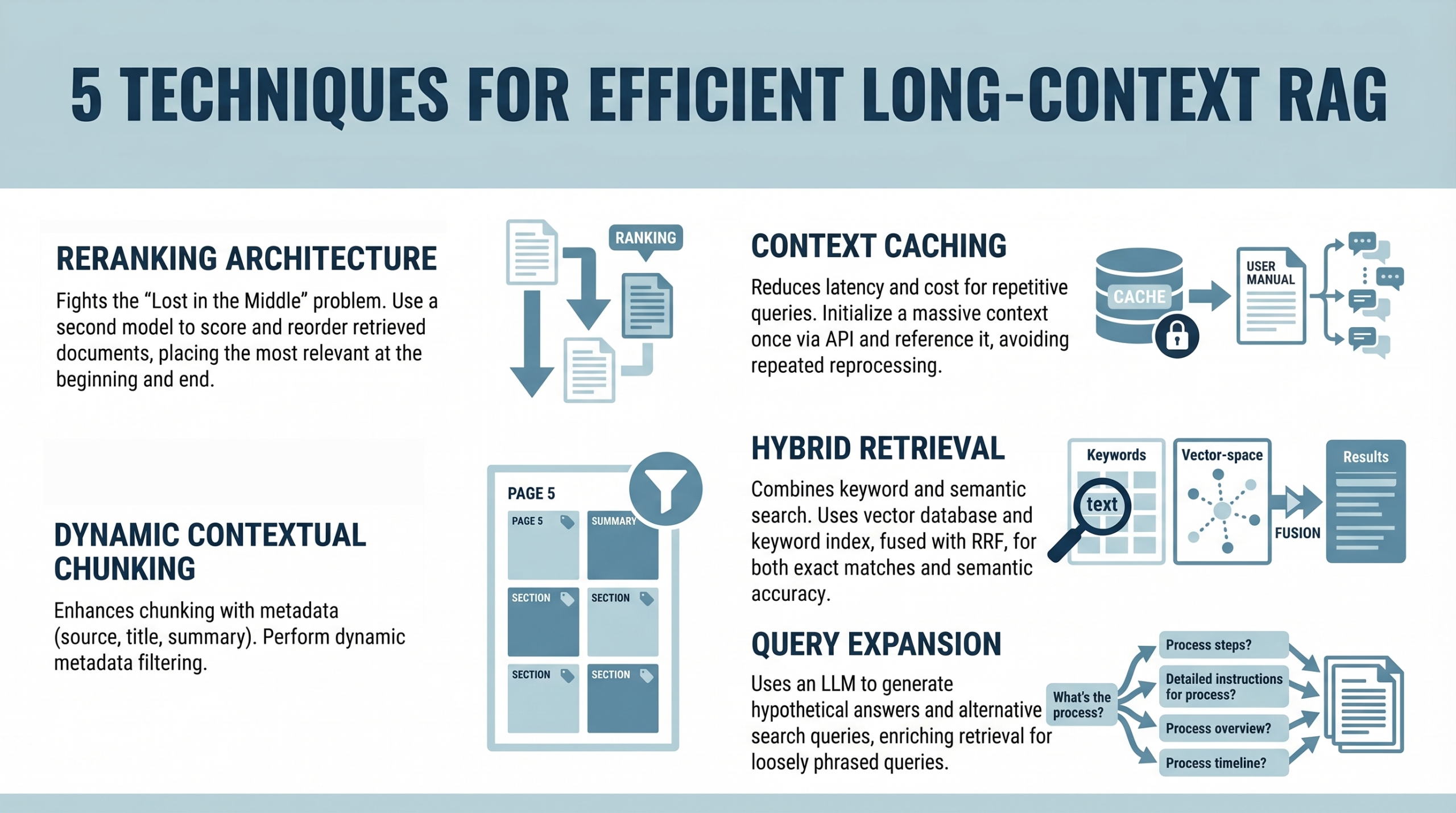

Traditional <a href="https://aws.

Google recently launched TurboQuant, a novel algorithmic suite and library for applying advanced quantization and compression to large language models (LLMs) and to vector search engines, which are an indispensable element of RAG systems.

FastAPI has become one of the most popular ways to serve machine learning models because it is lightweight, fast, and easy to use.

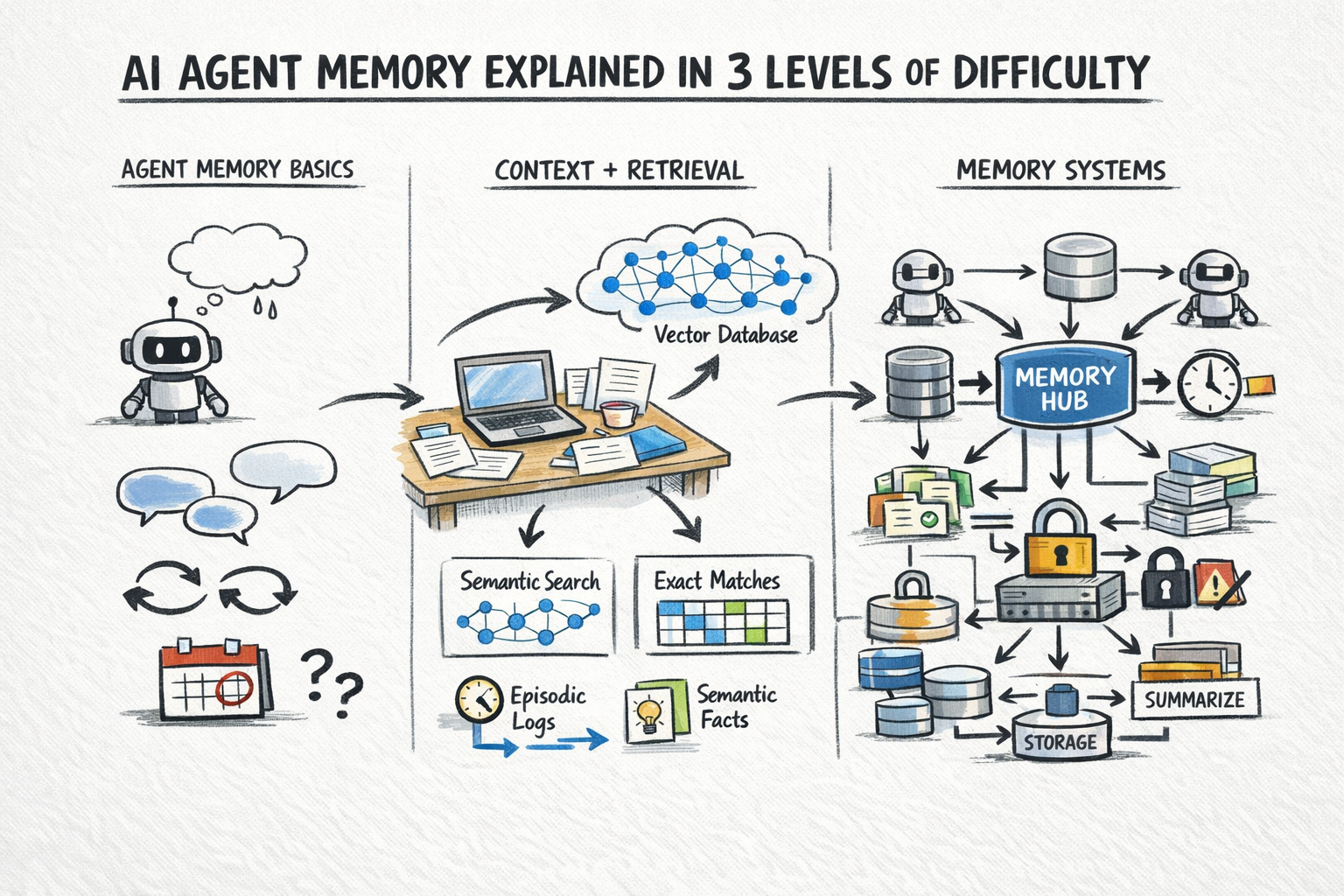

A stateless AI agent has no memory of previous calls.

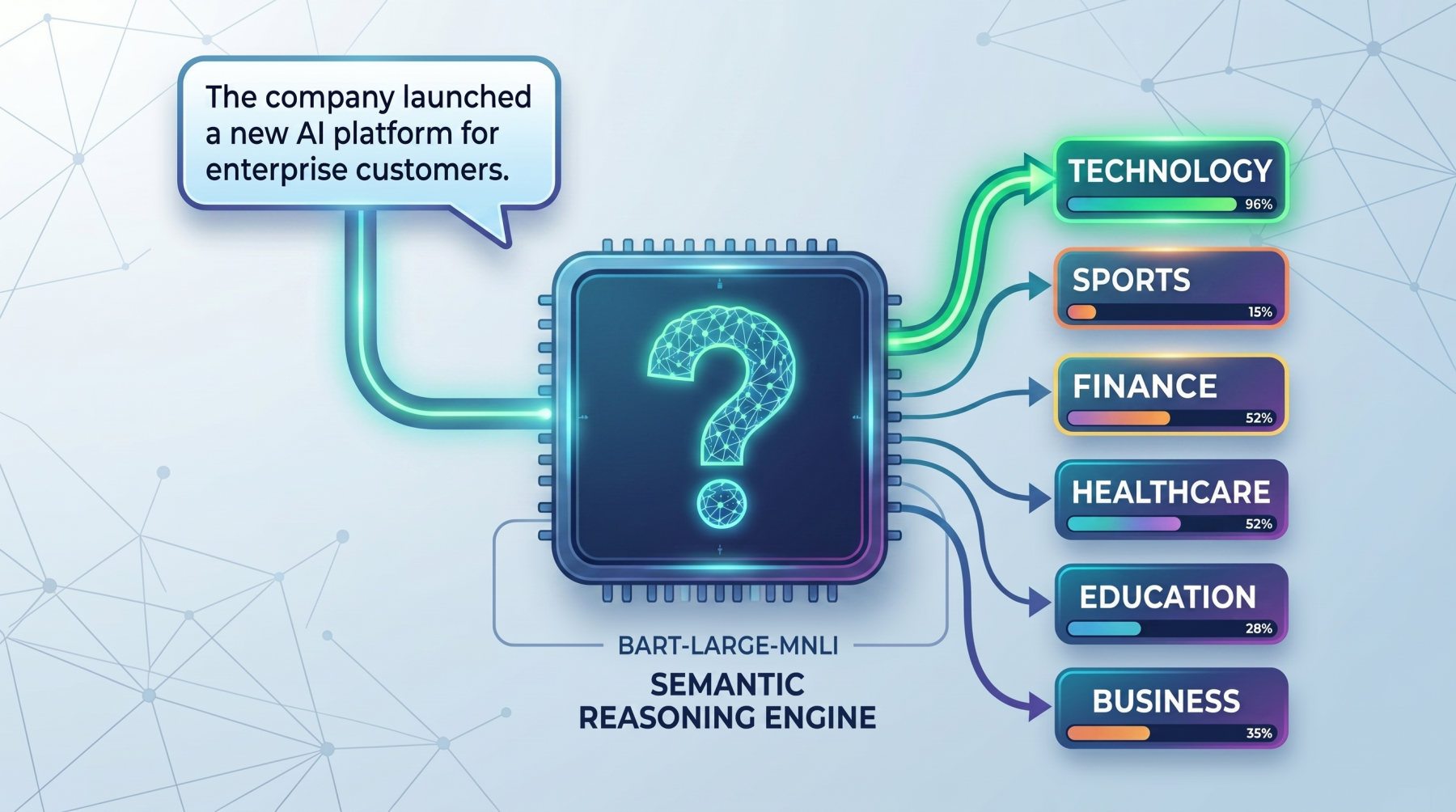

Zero-shot text classification is a way to label text without first training a classifier on your own task-specific dataset.

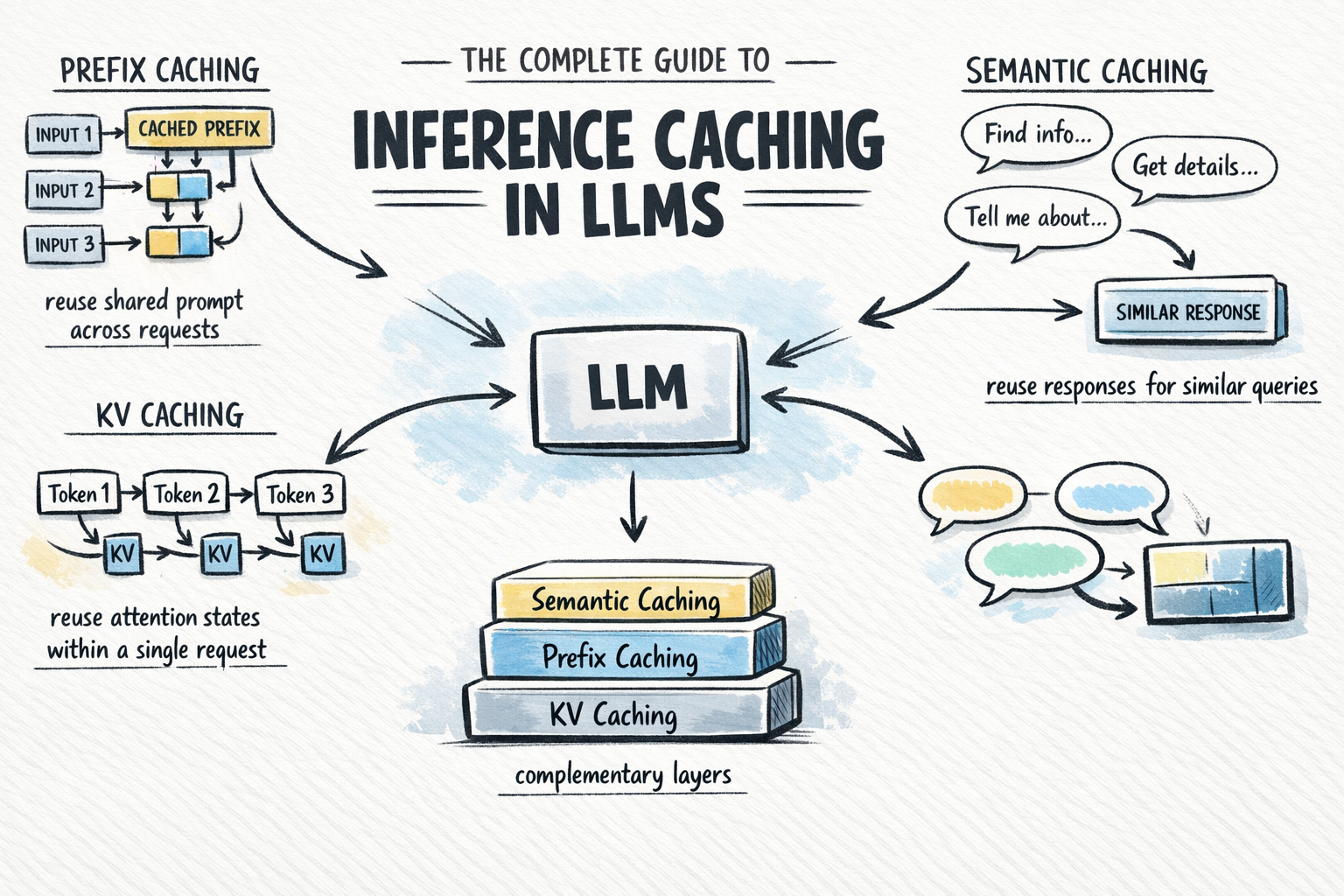

Calling a large language model API at scale is expensive and slow.

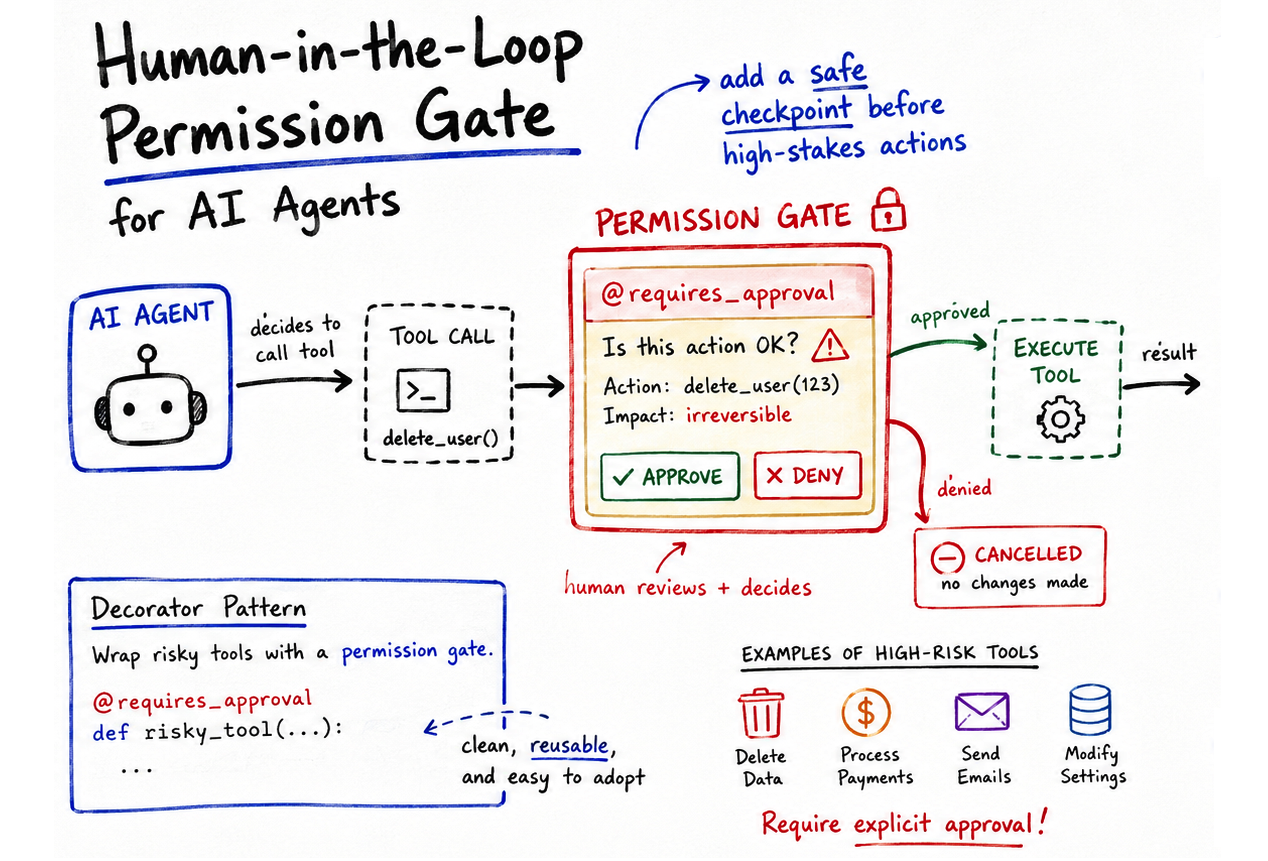

You've probably written a decorator or two in your Python career.

<a href="https://machinelearningmastery.

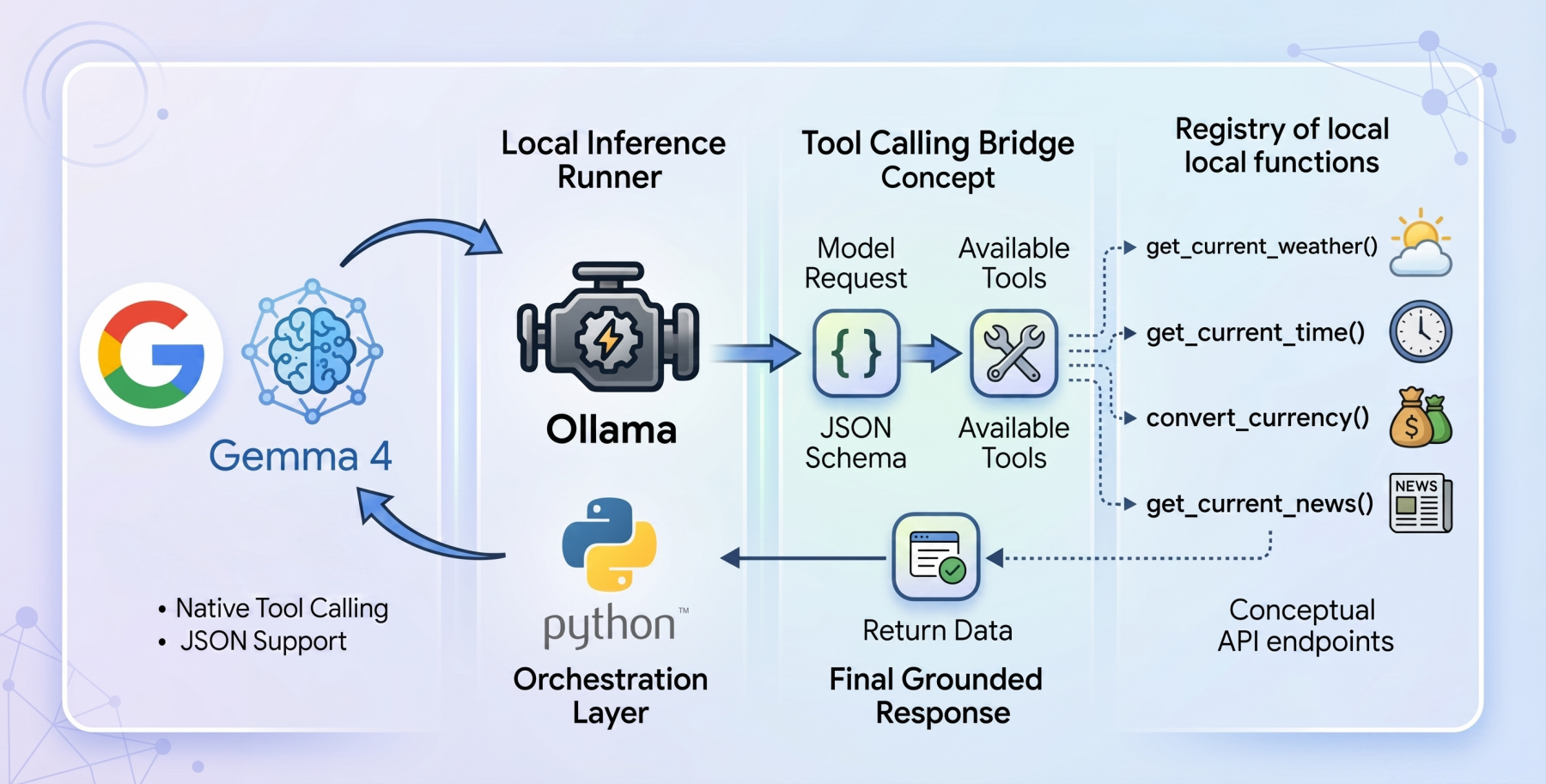

The open-weights model ecosystem shifted recently with the release of the <a href="https://blog.

Language models (LMs), at their core, are text-in and text-out systems.
